Programming Parallel Computers

Version 4: Vectorized memory access [advanced]

In the analysis of the V3 kernel, we found that we introduced two new bottlenecks: MIO Throttle and Barrier stalls. We will now turn to eliminating the first of those.

Vector types in CUDA

The largest size of a memory request that a thread can send out is 128 bits, yet by loading individual floats we only request 32 bits each time. This is not as bad as it initially sounds, because the memory controller will coalesce the requests of multiple threads within one warp together.

Still, generating a large number of memory accesses does not come for free. Addresses need to be calculated, and issuing a load instruction does take up one issue slot that could be used for actual useful work. However, most importantly, if we use wider loads, we can request a larger amount of data to be read from shared memory with the same number of requests in the queue, thus effectively preventing the queue from overflowing and our warps from stalling. How can we make use of these wider loads, then?

To handle vectorized data loading, the CUDA compiler provides a predefined float4 type. You can actually go into

the CUDA headers and look up its definition, which should look something like this:

struct __device_builtin__ __builtin_align__(16) float4 {

float x, y, z, w;

};

This also suggests a major pitfall when working with vector loads. In order to be able to use wide load instructions, the target address must be aligned to a multiple of the load size, that is, 128 bits, or 16 bytes. Contrary to the CPU case, there are no unaligned loads on the GPU; attempting to load from a misaligned address will crash the kernel.

Fortunately, alignment is also a bit more predictable in CUDA, as cudaMalloc guarantees to return a well-aligned

address, so if one is careful in tracking all offsets, there should not be any alignment problems.

It is important to stress that float4 only optimizes interactions with the memory system. There is no SIMD

capability: for example, adding two float4s will still require a sequence of 4 scalar additions.

Nor are there vector registers; float4 will just occupy four regular 32-bit registers.

Vectorizing V2

Let us first consider the task of vectorizing V2. In the scalar version, data is loaded by having each thread issue 8 load instructions, with a stride of 8 elements. This means that each warp reads 32 bytes per instruction, and each individual float is broadcast to four different threads.

for (int ib = 0; ib < 8; ++ib) {

int i = ic * 64 + ib * 8 + ia;

x[ib] = t[nn*k + i];

}

If we want to use vectorized load instructions, we instead require each thread to process four consecutive elements.

With d and t now pointers of type float4*, strides need to be divided by four to access the same

elements as before. For convenience, we unpack the retrieved vectors into a single array (to be kept in registers), which means that we don't have to

change the main computation loop.

float x[8];

for (int ib = 0; ib < 8; ib += 4) {

float4 v = t[nn/4 * k + (ic * 8 + ib) * 2 + ia];

x[ib+0] = v.x;

x[ib+1] = v.y;

x[ib+2] = v.z;

x[ib+3] = v.w;

}

However, by changing the access pattern, we have changed which results within the 64×64 tile are calculated by which thread, so we need to adjust the logic that writes the result back to main memory. Writing down the correct indexing expression is left as an exercise to the reader.

Let us take a look at the statistics of this new implementation. As expected, the number of load instructions has been reduced by a factor of four, to only twice the number in the shared-memory solution.

| Metric | V0 | V1 | V2 | V3 | V2v |

|---|---|---|---|---|---|

| DRAM reads | 60 GB | 127 GB | 17 GB | 16 GB | 35 GB |

| DRAM bandwidth utilization | 4% | 24% | 21% | 25% | 41% |

| L2 reads | 155 GB | 455 GB | 27 GB | 28 GB | 65 GB |

| L1 hit rate | 96% | 56% | 54% | 7% | 29% |

| L1 read requests | 15638 | 15638 | 1976 | 247 | 988 |

| L1 write requests | 1.2 | 1.2 | 1.2 | 1.2 | 1.2 |

| Instructions | 42091 MInst | 42091 MInst | 18406 MInst | 19239 MInst | 19027 MInst |

| Duration | 3763 ms | 1389 ms | 208 ms | 168 ms | 220 ms |

Despite the drastic reduction in load instructions, we do not see a wall-clock speedup. Why is that?

The main reason is that the V2 implementation was bound by memory latency (Long Scoreboard stalls), not by bandwidth or too many instructions (LG Throttle stalls). The additional loads in V2 execute in parallel, and are likely to be serviced from caches as they exhibit high spatial locality, so they do not add to the overall latency.

This changes once we consider V3 as the baseline. In our profiling, we saw MIO Throttle as a significant contributor to warp stalls. Such a stall is an indication that we have exhausted the parallelism that is available for shared memory. Additionally, V3 has sufficient data reuse to hide memory latency; thus, a reduction in the number of instructions to be executed has the potential to reduce overall time.

Combining vectorization and shared memory

In the shared memory implementation V3, there are three types of memory access: first, transferring from global to shared memory; then reading from shared memory during the main computation; and finally writing back the results. All of these can be vectorized.

Data loading from global memory is handled by the following code in V3:

int ija = ja * 8 + ia;

int i = ic * 64 + ija;

int j = jc * 64 + ija;

for (int f = 0; f < 4; ++f) {

int k = ks + f;

xx[f][ija] = t[nn*k + i];

yy[f][ija] = d[nn*k + j];

}

Here, we combine the ia and ja indices into a single linear index to ensure that each warp executes perfectly coalesced reads.

But if each thread directly loads four elements, just 16 threads are sufficient to load the entire row of 64 numbers that we got before, leaving 3/4 of our threads idle.

These threads could instead already handle the data that ordinarily would only be requested in the next iteration of the f loop, that is,

threads 0 to 15 handle the f=0 case, threads 16 to 31 handle f=1, and so on. With 64 threads, we perfectly cover what used to be a loop

with a single instruction per thread:

int lin_vec_index = (ja * 8 + ia);

int ija = lin_vec_index % 16;

int f = lin_vec_index / 16;

int i = ic * 16 + ija;

int j = jc * 16 + ija;

xx[f][ija] = t[nn/4 * (ks + f) + i];

yy[f][ija] = d[nn/4 * (ks + f) + j];

The memory access pattern now looks like this:

The animation above illustrates what happens in the first block of threads for the first three iterations of the loop:

- Orange: which elements are accessed by the first thread.

- Blue: which elements are accessed by the first warp of threads.

- Black: which elements are accessed by the first block of threads (assuming that they are executing in a synchronized manner, which is not necessarily the case).

- Top: array

d. - Bottom: array

t.

Using wider stores to save the result is a bit trickier, because currently, we allocate n * n elements for the result array, which means that

individual rows in that matrix may start with a misaligned address. However, if we increase the size of that array with padding, then

we cannot simply cudaMemcpy it into the CPU-side result pointer.

We can solve this by switching to cudaMemcpy2D, which allows us to specify a different stride between

rows in the host and device arrays, like this:

CHECK(cudaMemcpy2D(r, n * sizeof(float),

rGPU, nn * sizeof(float),

n * sizeof(float), n, cudaMemcpyDeviceToHost));

Finally, to give the optimizer a better idea of how to use the available registers optimally, we add a __launch_bounds__(8*8)

annotation. This is our promise to the compiler that we will never call this kernel with a block size that exceeds 64.

Visualization

This results in the following memory access and computation pattern, scaled down to 6 by 6 as if we were using warps of 24 instead of 32 threads.

- Orange: which elements are accessed by the first thread.

- Blue: which elements are accessed by the first warp of threads.

- Black: which elements are accessed by the first block of threads (assuming that they are executing in a synchronized manner, which is not necessarily the case).

- Top: shared memory

xx. - Middle: shared memory

yy. - Bottom: registers

v.

Results

Our interactions with the memory system now look very good: we are still using only about 31% of the available memory bandwidth, and we managed to reduce the number of instructions needed for memory interactions by a factor of four.

| Metric | V0 | V1 | V2 | V3 | V4 |

|---|---|---|---|---|---|

| DRAM reads | 60 GB | 127 GB | 17 GB | 16 GB | 16 GB |

| DRAM bandwidth utilization | 4% | 24% | 21% | 25% | 31% |

| DRAM writes | 191 MB | 172 MB | 161 MB | 161 MB | 153 MB |

| DRAM write bandwidth utilization | 0.01% | 0.03% | 0.2% | 0.2% | 0.3% |

| L2 reads | 155 GB | 455 GB | 27 GB | 28 GB | 28 GB |

| L2 writes | 518 MB | 189 MB | 263 MB | 250 MB | 153 MB |

| L1 hit rate | 96% | 56% | 54% | 7% | 4% |

| L1 read requests | 15638 | 15638 | 1976 | 247 | 62 |

| L1 write requests | 1.2 | 1.2 | 1.2 | 1.2 | 0.3 |

| Shared memory reads | 0.00 | 0.00 | 0.00 | 1976 | 494 |

| Shared memory writes | 0.00 | 0.00 | 0.00 | 247 | 62 |

We also see that the amount of data written back to L2 has been reduced. This is because, by padding the result buffer, we ensured that all our write operations are aligned at a cache-line boundary, and each warp always writes a full cache line.

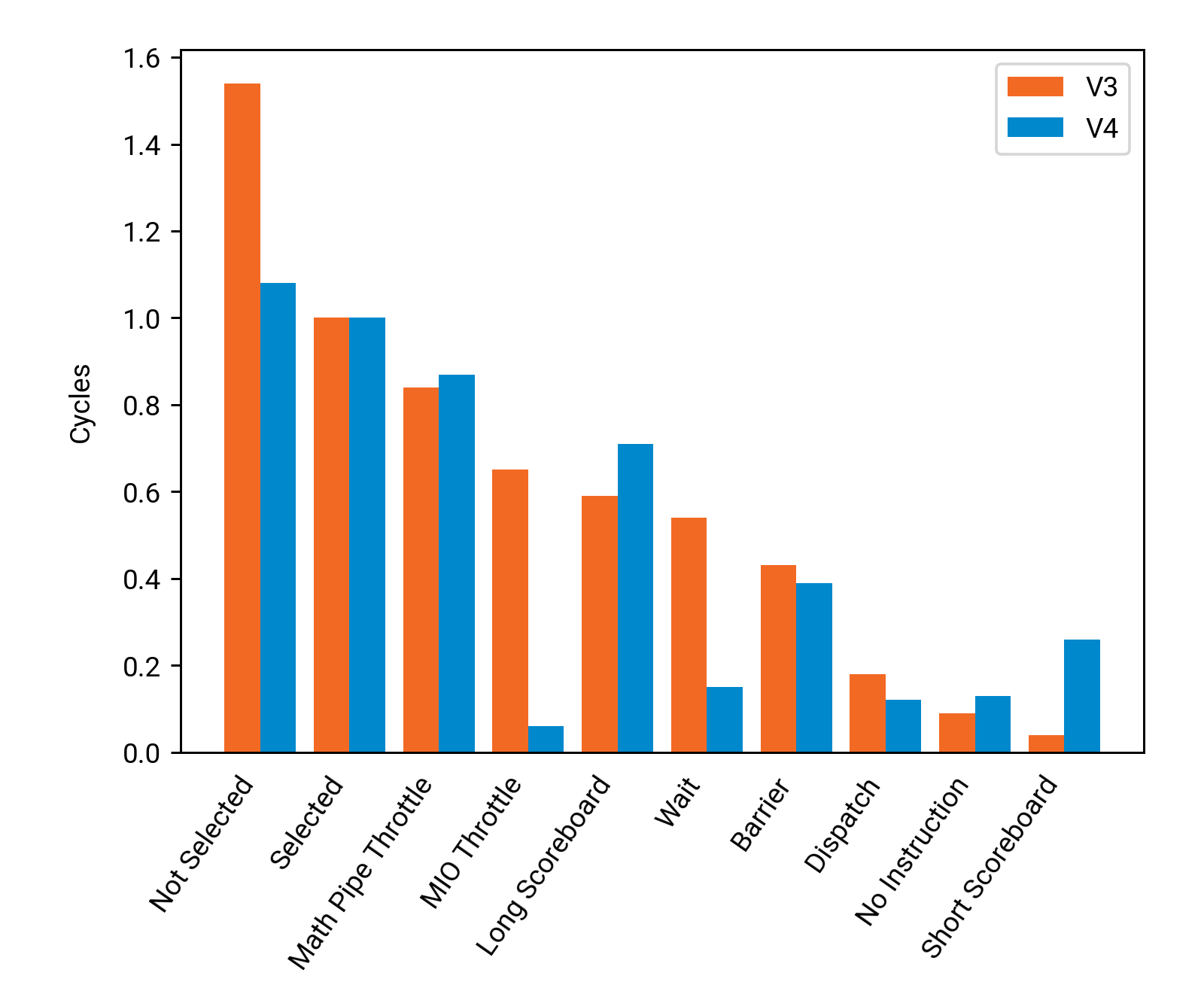

The warp stall statistics look similar to V3 overall, but most of the MIO Throttle stalls are gone.

We also see a marked reduction in Not Selected. This is because we now need even more registers, 128 to be exact. That leaves us with 16 active warps; yet these warps are so efficient that at least one is eligible per scheduler 84% of the time, up from 80% in V3.

A new type of warp stall also appears: Short Scoreboard. In contrast to Long Scoreboard, which means that the instruction is waiting for data to arrive from global memory, Short Scoreboard indicates waiting on shared memory. We are now fast enough that the latency of shared memory starts to matter!

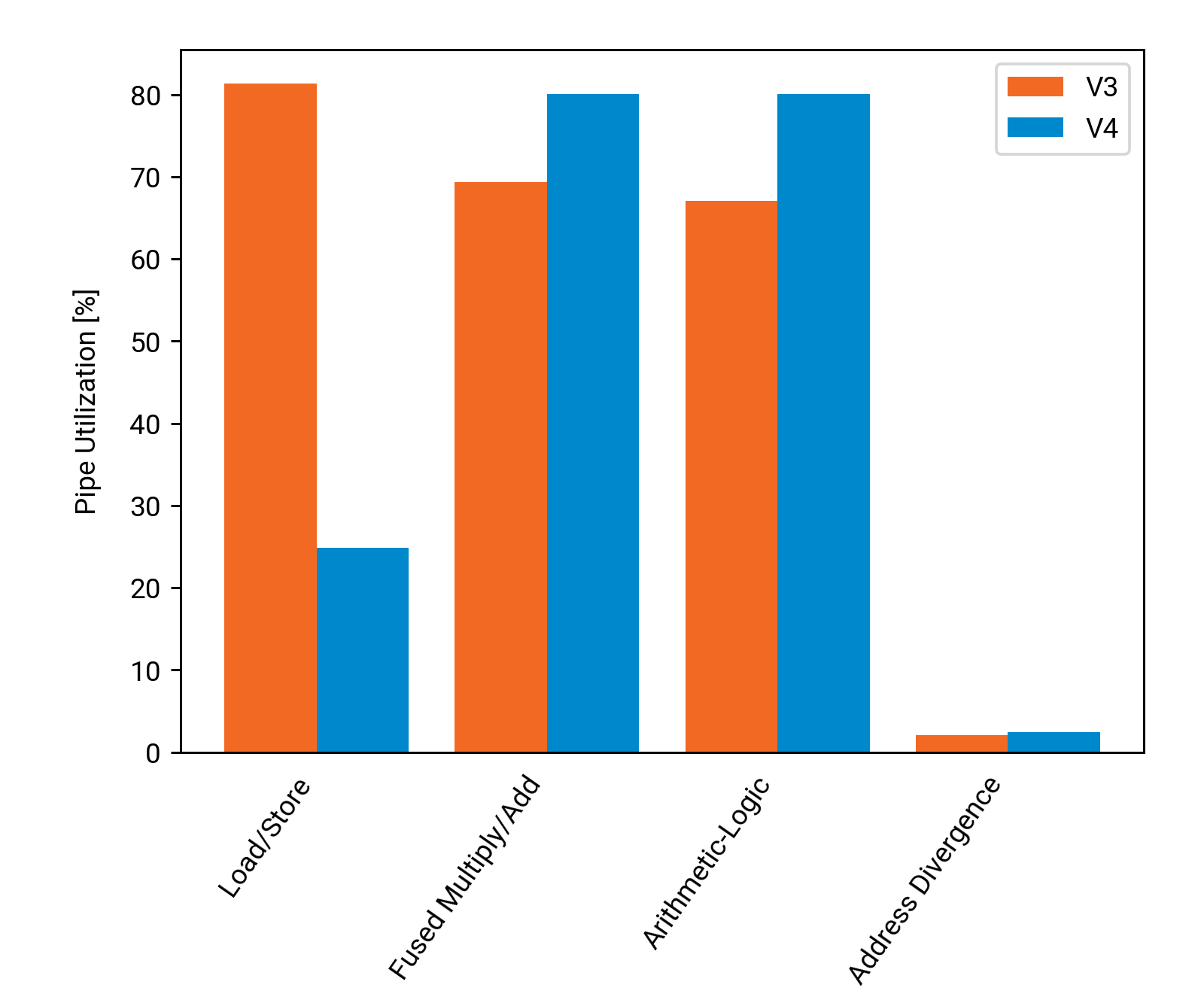

We can also look at how much we utilize different parts of the GPU, compared to version 3:

Unsurprisingly, we see a massive drop in LSU utilization, from running almost at maximum capacity to only being used lightly.

This in turn frees up capacity to increase usage of FMA units (for addition) and ALU units (for minimum). The last pipeline to have

any noteworthy use is the ADU, which is handling the __syncthreads() barriers.

Finally, here is a summary of how much progress we made compared to the initial version, and how certain key properties of the kernel changed:

| Metric | V0 | V1 | V2 | V3 | V4 |

|---|---|---|---|---|---|

| Registers per thread | 50 | 50 | 96 | 96 | 128 |

| Active Warps | 32 | 32 | 20 | 20 | 16 |

| Warp Latency | 103 | 38 | 8 | 6 | 5 |

| Warp Eligibility | 8% | 21% | 61% | 80% | 84% |

| Instructions | 42091 MInst | 42091 MInst | 18406 MInst | 19239 MInst | 16643 MInst |

| Cycles | 544694 M | 200952 M | 30317 M | 24219 M | 19944 M |

| Duration | 3763 ms | 1389 ms | 208 ms | 168 ms | 137 ms |

| Efficiency | 4 % | 10 % | 57 % | 67 % | 78 % |

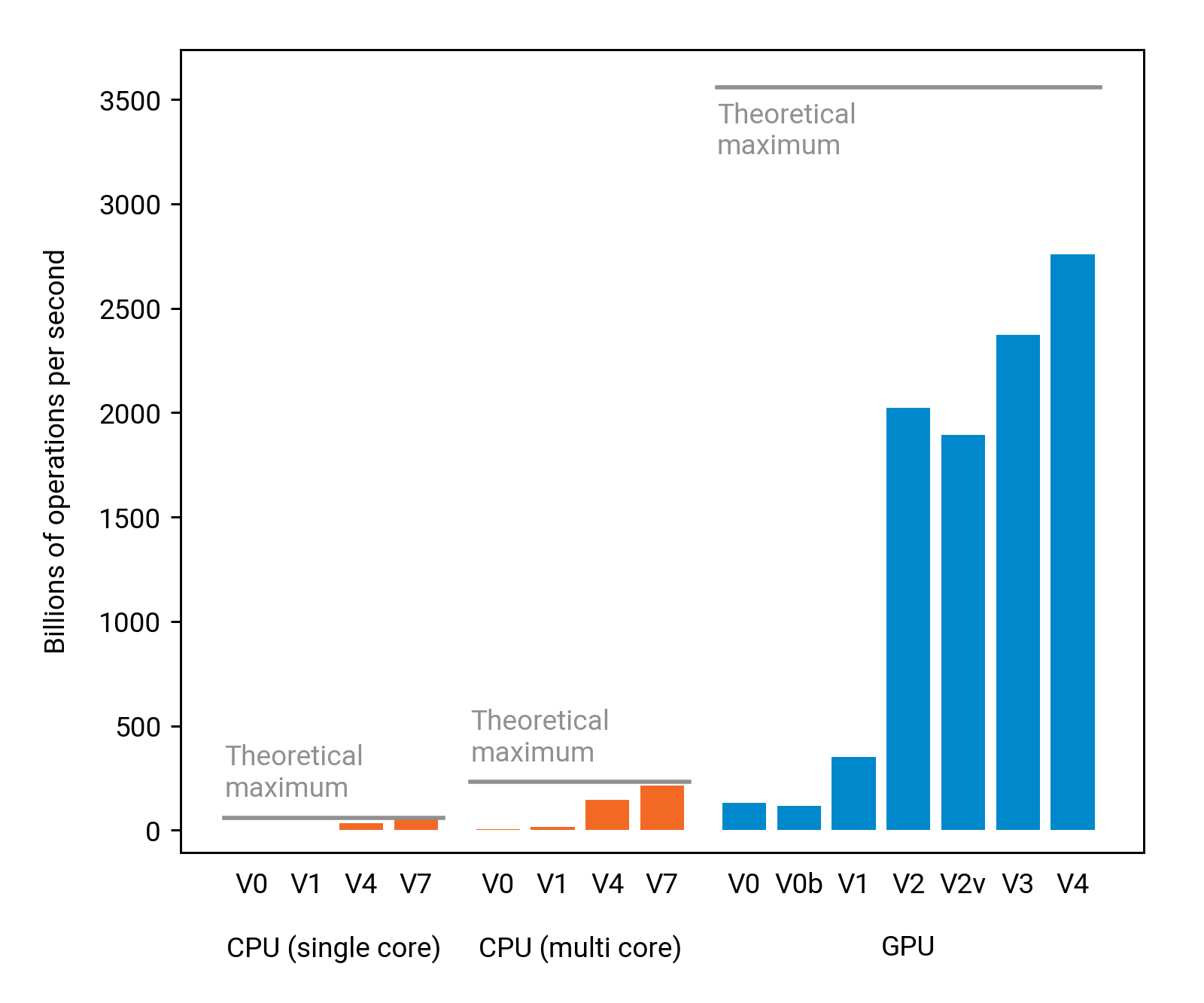

With the help of vectorized loads, we improved the running time slightly, from 211 ms to 181 ms. We are now using 78 % of the GPU's theoretical maximum performance, and we are doing much better than any of the CPU solutions on the same computer:

Concluding remarks

We are now at a point where the total running time is 181 ms, of which only 137 ms are actually spent inside the kernel.

Better efficiency could be achieved either by weak scaling (i.e., running on a larger problem), or by starting to overlap the kernel execution with the CPU-GPU data transfers. This exploration is left to the reader.